The fact that this algorithm is both conceptually simple and provably useful is rather extraordinary to me and is why Huffman encoding will always hold a special place in my heart. This will create a codebook that looks like this: CharacterĪnd bibbity_bobbity becomes 01000010010111011110111000100101110.Īs mentioned this uses the minimum number of bits possible for encoding. Here's an image of what this might look like for the phrase bibbity_bobbity: Keep all codewords and put them into your final set of codewords (sometimes called a codebook) Read the tree backwards from the root node and concatenate the final bitstring codeword.Keep doing step 2 until the tree is complete.Add the smallest two values together to create a new node with a new frequency.Be sure to keep track of the frequencies, too! Order all characters according to the frequency they appear in the input bitstring, with the most frequent character at the top of the list.Well, here we build it from the bottom up like so: So now the question is: how do we create a binary tree? The idea is somewhat straightforward in principle, but a little difficult to code in practice.īy creating a binary tree of the input alphabet, every branch can be provided a unique bit representation simply by assigning a binary value to each child and reading to a character in a leaf node if starting from the root node. Huffman encoding ensures that our encoded bitstring is as small as possible without losing any information.īecause it is both lossless and guarantees the smallest possible bit length, it outright replaces both Shannon and Shannon-Fano encoding in most cases, which is a little weird because the method was devised while Huffman was taking a course from Fano, himself! We have a string that we want to encode into bits. Huffman encoding follows from the problem described in the Data Compression section. I have since accepted that fact and moved on. It was in that moment, I knew I would never amount to anything. He managed to rip the heart out of the methods described by leaders of the field and create a data compression method that was easier to understand and implement, while also providing more robust results, and apparently this was all done for a school project!

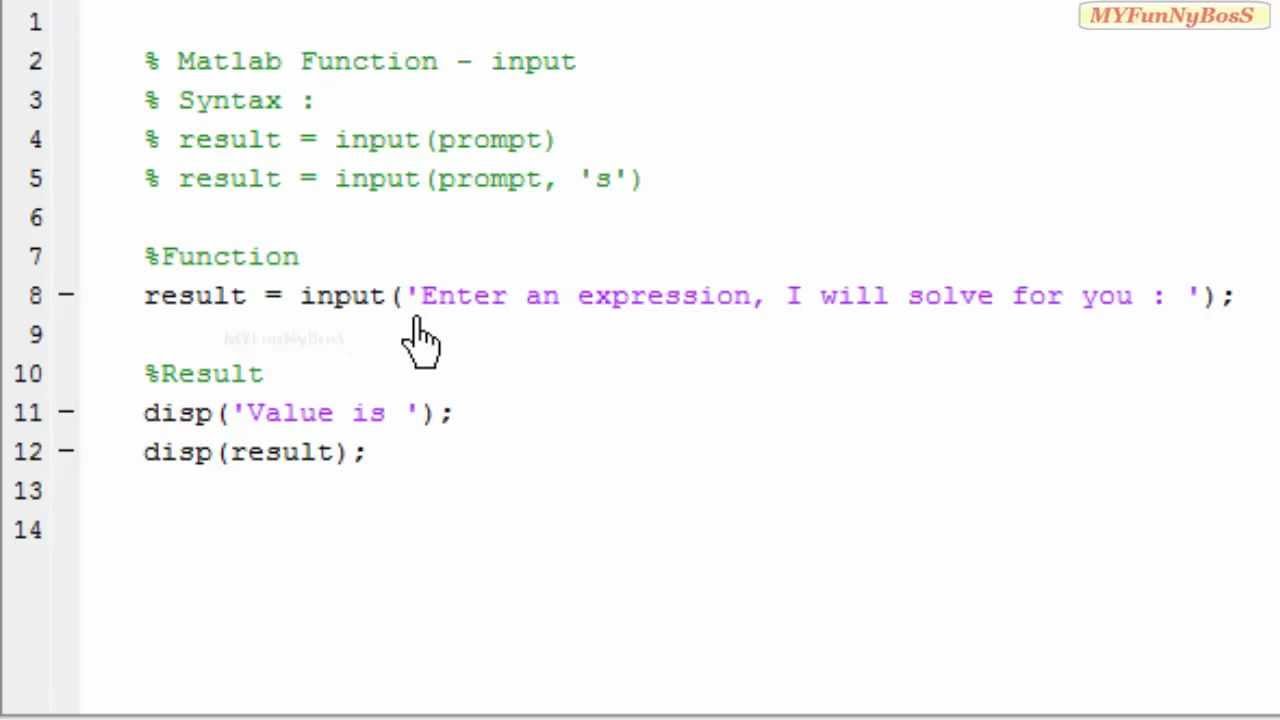

I distinctly remember sitting in my data compression class and talking about the great information theorist Claude Shannon and Robert Fano, when suddenly my professor introduced a new kid to the mix: David Huffman. In fact, this was the method that got me into computational methods to begin with. For one thing, this will escape using an additional for loop for searching for a match as strcmp is very efficiently implemented, so the above code won't be O(n^2), but could be slightly better than quadratic.If there were ever a data compression method to take the world by storm, it would be Huffman encoding. Give the above a whirl and see if it performs any faster. mainly because I don't like using length. Also, I've changed the length call to numel. See this post by Shai Bagon for more details: Using i and j as variables in Matlab. Note that I've changed the loop counter i to idx because it has actually been shown that using i as a loop counter slows down your code by a slight amount. Therefore, we can change your code to do this. Therefore, if we only had one true value in the logical vector with the rest of the elements being false, this means that we would get just the corresponding element we desire and nothing else. Logical indexing works by supplying a logical vector that is the same length as the vector of interest and it retrieves those values whose corresponding positions are true. Therefore, we can use this logical array output to index the codebook directly to retrieve the corresponding code you want. The output will be an array that is the same size as the cell array of strings and give you a logical true if the input string matches a position in the cell array and false otherwise.īecause your Huffman dictionary will contain a unique set of characters, you will only get one possible match per character. strcmp has the ability to take an individual string and compare itself to a cell array of strings. I'm assuming that each character in your cell array is represented as a one character string. In DEFLATE, there are up to 30 values for the distance codes (the D-tree), but there are up to 286 values for the Literal-Length codes (the LL-tree), so in general the time for the LL-tree generation is largest. What you can do is use strcmp to compare strings. The time needed to compute the codes is a function of how many non-zero weights there are. However, I can provide some suggestions to help speed things up. Because you're using cell arrays, that's going to be inherently slow so you have no choice but to iterate over each cell.

0 Comments

Leave a Reply. |

RSS Feed

RSS Feed